AI-based Database Image Classification with Altova MapForce

One of the most common examples of AI in our everyday lives is facial recognition. Facial recognition is the process of identifying or verifying a person’s identity based on their face. Facial recognition is used in many applications, such as unlocking our phones with FaceID, tagging our friends on social media platforms like Facebook, and checking in at airports or hotels with biometric scanners. Facial recognition can make our lives more convenient and secure, but it can also raise some privacy and ethical concerns. For instance, how can we ensure that our facial data is not misused or stolen by hackers or malicious actors? How can we prevent facial recognition from being used for surveillance or discrimination? How can we ensure that facial recognition is accurate and fair, and does not have any biases or errors?

The paragraph above was generated by ChatGPT in response to my request to describe the benefits and risks of artificial intelligence and include a real-life example. It’s interesting that ChatGPT chose FaceID as the example, since FaceID is just one variation of image analysis, and AI-powered image classification has the potential to automate many real-world tasks.

One common use case is a product catalog, in which a company manages product information provided by many different manufacturers. A product loaded into that database may have a name that does not include a precise description of the item. For instance, a wellington is a boot, a fedora is a hat, a mongoose is a bicycle, and a yellow watermelon shiny needlefish is a fishing lure. We can address this problem with AI-powered image classification using the Microsoft Azure Cognitive Services Computer Vision API. The Computer Vision Service takes image data or an image URL as its input and returns information about the content. One service generates image classification tags drawn from the recognizable objects, living beings, scenery, and actions that the Azure AI has been trained on. These tags allow us to categorize products in the database accordingly and may even correspond to search terms a user might provide to find products in the catalog.

We can create an AI-based data mapping using Altova MapForce to send product images to the Computer Vision AI using its web service API. Altova MapForce is an award-winning, graphical data mapping tool for any-to-any data conversion and integration. The Computer Vision API uses artificial intelligence to analyze each image and return a list of tags. MapForce supports Web services directly within a mapping, and intermediate result processing or chained data mapping functionality can then insert the tags back into a dedicated field in the database for each product.

This AI-based data mapping can automate the generation and insertion of AI-generated tags for product catalogs in a completely scalable manner. Each tag returned by the AI comes with a confidence score, making it easy to set a threshold in the mapping so that only tags with sufficiently high confidence are used.

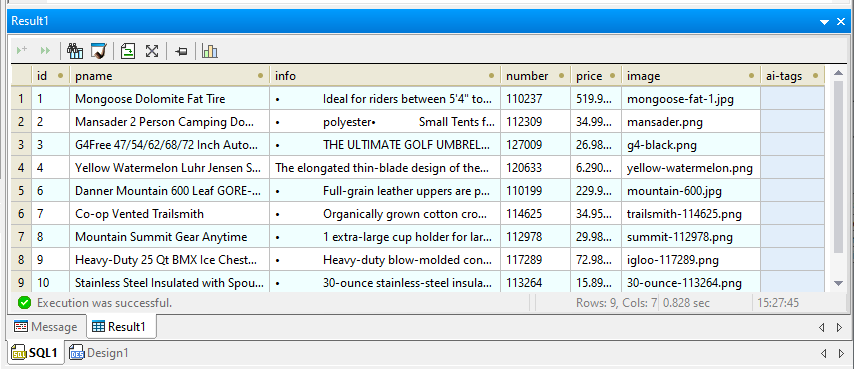

We started with a small sample database of common outdoor recreation products and used Altova DatabaseSpy to add a field to the products table for AI tags. DatabaseSpy connects to all major databases, easing SQL editing, database structure design, content editing, and database conversion for a fraction of the cost of single-database solutions. Here is a DatabaseSpy query result window showing database content and the new empty field.

And here in random order are thumbnail versions of the example product images:

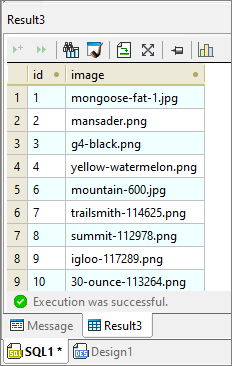

We also used DatabaseSpy to validate a simple SQL query to retrieve just the id, which is the table primary key, and the corresponding image filename.
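For readers who want to try the same query outside DatabaseSpy, here is a minimal sketch using Python's built-in sqlite3 module. The table name cat-products and the ai-tags field are the ones used later in this post; the `image` column name, the in-memory SQLite database, and the sample rows are assumptions made purely for illustration.

```python
import sqlite3

# Stand-in for the product database. Only the table name "cat-products"
# and the "ai-tags" field come from this post; the "name" and "image"
# columns and the sample rows are assumed for illustration.
conn = sqlite3.connect(":memory:")
conn.execute(
    'CREATE TABLE "cat-products" ('
    "id INTEGER PRIMARY KEY, name TEXT, image TEXT, "
    '"ai-tags" TEXT)'
)
conn.executemany(
    'INSERT INTO "cat-products" (id, name, image) VALUES (?, ?, ?)',
    [(1, "Wellington", "wellington.jpg"), (2, "Fedora", "fedora.jpg")],
)


def fetch_image_rows(connection):
    """Return (id, image filename) pairs, ordered by primary key."""
    cursor = connection.execute(
        'SELECT id, image FROM "cat-products" ORDER BY id'
    )
    return cursor.fetchall()


rows = fetch_image_rows(conn)
print(rows)
```

The double quotes around the table and field names are needed because both contain a hyphen.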

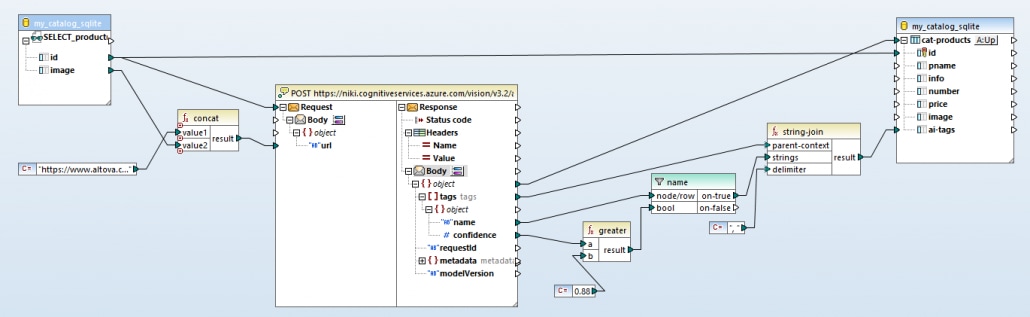

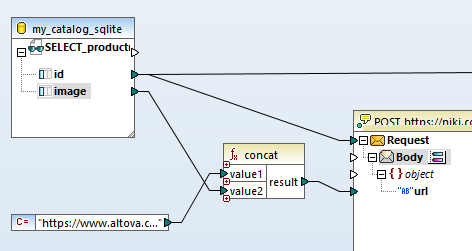

The SQL query is the first input for the MapForce AI-based data mapping, which has three main components: the query result, the Azure Image Analysis API request, and a SQL script to insert the API responses back into the database.

Let’s look at the request to the artificial intelligence API in more detail:

SQL Query Result as a Data Mapping Input Source

The Select query generates a table of data, essentially a list of key numbers and corresponding image file names, but the API only accepts requests for one image at a time. By mapping the id from the query to the envelope at the top of the API component, we generate a new API call for each id returned, iterating through each image in the list. The other connection line from the id exiting the image at the top right will be used later to build a SQL update script.

The designer of the database table chose to reference the product images by file names rather than include binary images as BLOB objects in the database. The artificial intelligence image analysis API can either accept raw image data or a URL to a publicly accessible image, so we can simply provide the image URL to the API in our request. The concat function in the center above adds the path to build each complete URL.
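Outside MapForce, the same two ideas (one request per row and a concatenated URL) could be sketched as follows. The base URL is a placeholder, the function names are hypothetical, and the percent-encoding step is an addition that the concat function in the mapping does not perform.

```python
from urllib.parse import quote

# Placeholder path; the real image location is not given in this post.
BASE_URL = "https://example.com/product-images/"


def build_image_url(filename, base_url=BASE_URL):
    """Concatenate the base path and the file name into a complete URL."""
    # quote() percent-encodes characters such as spaces in file names.
    return base_url + quote(filename)


def iter_requests(rows):
    """Yield one (id, image URL) pair per query row, one API call each."""
    for product_id, filename in rows:
        yield product_id, build_image_url(filename)


for product_id, url in iter_requests([(1, "umbrella.jpg"), (2, "fishing lure.jpg")]):
    print(product_id, url)
```

Keeping the id alongside each URL mirrors the second connection line in the mapping, which carries the id forward for the SQL update.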

Executing the AI Web Service Request

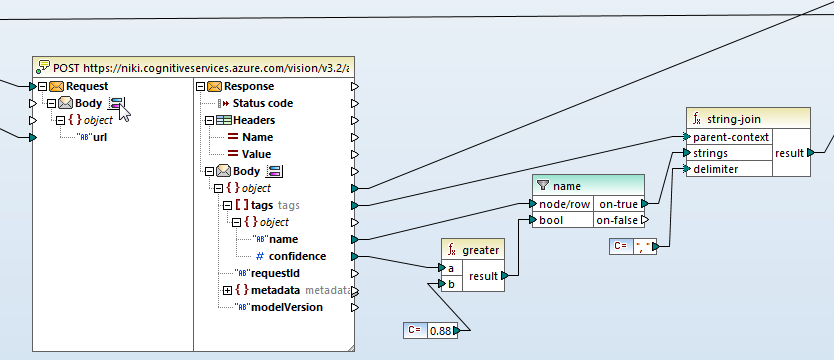

The center portion of the AI-based data mapping shows the Web service function that calls the artificial intelligence image analysis API and further processing of the results returned:

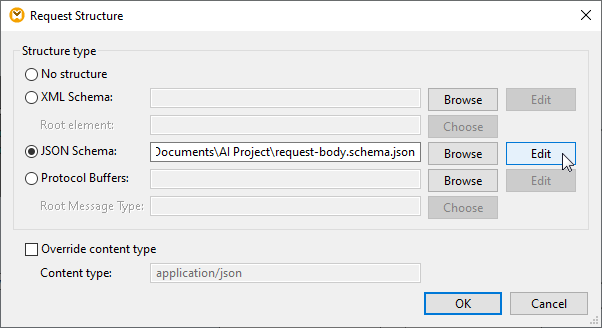

Note the envelope icon labeled Body on both the Request and Response sides of the web service function. The API requires a JSON object as the Request body and specifies the Response will be a JSON document. The blue and red buttons next to the Body icons open a dialog to provide the structure definitions for the Request and Response.
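To make the Request body concrete, here is a rough Python sketch that builds (but does not send) an equivalent HTTP request. It assumes the v3.2 REST route `/vision/v3.2/tag` and the `Ocp-Apim-Subscription-Key` header; the endpoint host, key, and image URL are placeholders, and the API version used in the actual mapping is not stated in this post.

```python
import json
import urllib.request


def build_tag_request(endpoint, subscription_key, image_url):
    """Build a POST request whose JSON body points at one public image URL."""
    # Assumed route: the v3.2 "tag" operation of the Computer Vision API.
    url = endpoint.rstrip("/") + "/vision/v3.2/tag"
    body = json.dumps({"url": image_url}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Ocp-Apim-Subscription-Key": subscription_key,
        },
    )


request = build_tag_request(
    "https://example-resource.cognitiveservices.azure.com",  # placeholder
    "YOUR-SUBSCRIPTION-KEY",  # placeholder
    "https://example.com/product-images/umbrella.jpg",  # placeholder
)
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
# Sending it would be: urllib.request.urlopen(request), which returns JSON.
```

The request is only constructed here so the sketch runs without credentials or network access.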

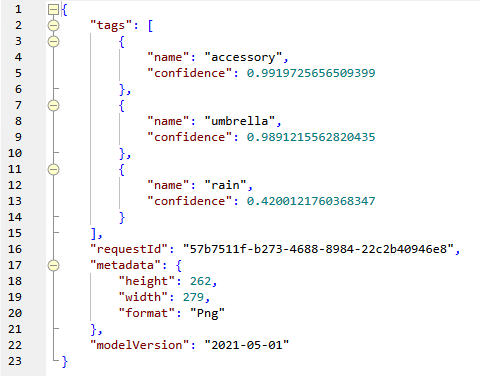

The response from the artificial intelligence API needs further processing. Tags are returned as a list of JSON objects, and each tag is accompanied by a number that represents accuracy confidence as a percentage. Shown below is one example, the AI response in original JSON format for the umbrella image:

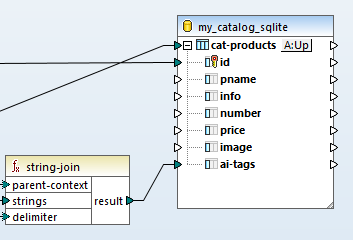

The greater function filters the response to accept only tags with a confidence level of 88 percent or greater. Note that the “rain” tag above shows 42% confidence, so it is filtered out. Next, the string join function combines the remaining tags into a comma-separated string.
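The filter-and-join step can be sketched in a few lines of Python. The sample response below is invented for illustration: only the "rain" tag at 42% comes from this post, and the sketch assumes the API reports confidence as a fraction between 0 and 1 inside a `tags` array, so 88 percent becomes 0.88.

```python
import json

# Illustrative response only. The tag names and scores are made up,
# except for "rain" at 42%, which is the value mentioned in this post.
SAMPLE_RESPONSE = """
{"tags": [
  {"name": "umbrella", "confidence": 0.99},
  {"name": "accessory", "confidence": 0.91},
  {"name": "rain", "confidence": 0.42}
]}
"""


def tags_above(response_text, threshold=0.88):
    """Keep tags at or above the threshold and join them with commas."""
    tags = json.loads(response_text).get("tags", [])
    kept = [tag["name"] for tag in tags if tag["confidence"] >= threshold]
    return ", ".join(kept)


tag_string = tags_above(SAMPLE_RESPONSE)
print(tag_string)
```

Raising or lowering `threshold` trades completeness against precision, which is the same adjustment as changing the constant fed to the filter in the mapping.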

And here is the string we want to apply to the umbrella product in the database after extracting only the tags we want:

Generating a SQL Update from the AI Responses

The right-side portion of the AI-based data mapping receives each processed result, correlates the result with the correct database id index, and maps the string of tags into the ai-tags field of the cat-products database table.
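As a rough equivalent in Python, the sketch below writes each tag string back by id. It swaps in parameterized updates against an in-memory SQLite table for the SQL script that MapForce generates; the `image` column and the sample row are assumptions, while the cat-products table and ai-tags field names follow this post.

```python
import sqlite3


def apply_tags(connection, tagged_products):
    """Write each comma-separated tag string to the row with the matching id."""
    connection.executemany(
        'UPDATE "cat-products" SET "ai-tags" = ? WHERE id = ?',
        [(tags, product_id) for product_id, tags in tagged_products],
    )
    connection.commit()


# Stand-in database with assumed columns and one sample row.
conn = sqlite3.connect(":memory:")
conn.execute(
    'CREATE TABLE "cat-products" '
    '(id INTEGER PRIMARY KEY, image TEXT, "ai-tags" TEXT)'
)
conn.execute(
    'INSERT INTO "cat-products" (id, image) VALUES (?, ?)',
    (1, "umbrella.jpg"),
)

apply_tags(conn, [(1, "umbrella, accessory")])
stored = conn.execute(
    'SELECT "ai-tags" FROM "cat-products" WHERE id = 1'
).fetchone()[0]
print(stored)
```

Passing the tags as parameters rather than splicing them into the SQL text avoids quoting problems if a tag ever contains an apostrophe.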

Examining Results of the AI-Based Data Mapping

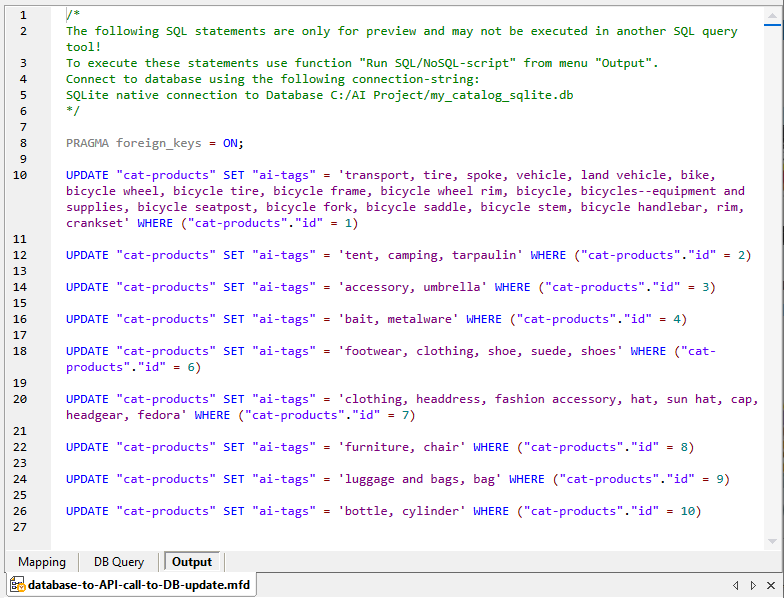

The Output preview button below the MapForce main mapping pane lets us see an example result: it executes the entire data mapping starting with the original Select query, performs the API requests, processes the artificial intelligence results, and finally creates SQL update statements, generating a complete SQL update script:

We can use the Run SQL Script selection from the MapForce main menu to execute the script and apply the database updates interactively. Alternatively, we can deploy this mapping to a MapForce Server instance on our network, where it can run automatically as part of a workflow for ingesting new products into the catalog. Using FlowForce Server, we could define triggers that automatically process the incoming data, validate it, perform the AI-based image tagging, and finally feed it into the production database.

Our requests to the Microsoft Azure Cognitive Services Computer Vision API used the Microsoft default pretrained vision model for this example. A custom vision model would be able to refine the tag results for specialized applications, but that goes beyond the scope of this blog post.

More information on the any-to-any data mapping and conversion functionality in MapForce is available from Altova, along with a free, fully functional 30-day trial that includes Help files, tutorials, and many data mapping examples.